dayum

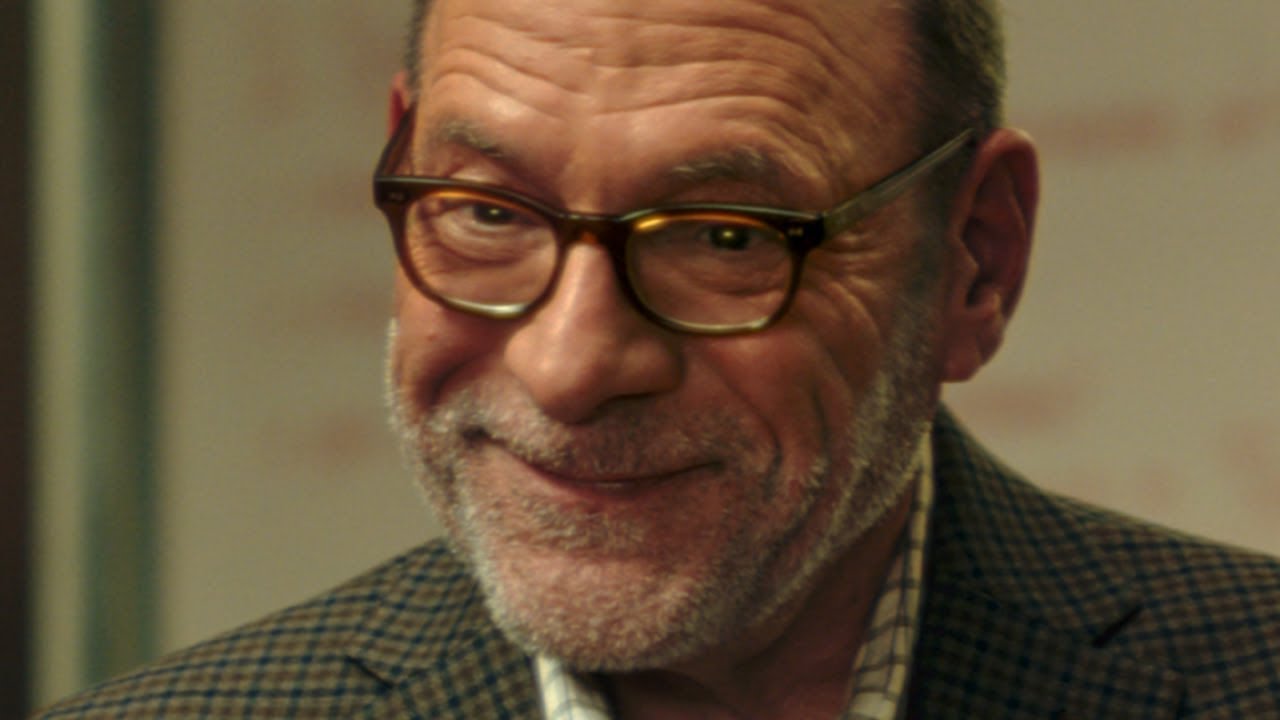

This satirical ad making fun of my company does not depict the situation in a completely accurate manner. Beep boop.

The other two

I think they’re running one of these ads during the Super Bowl

I like the thread title change, although I have never used Claude.

I wanted it to be subtle. Plus, given the nature of the LLM game, it will be back to OpenAI before too long, then Gemini, before it circles back to Claude, ad infinitum.

I seem to remember a fundamental law of economics being that firms make no profit in a competitive market.
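For reference, the textbook version of that law is about long-run equilibrium under perfect competition, and the "profit" that goes to zero is economic profit (revenue minus all costs, including opportunity cost), not accounting profit. In standard notation (P price, q output, ATC average total cost, MC marginal cost), free entry and exit push price down to the minimum of average total cost, so any positive profit gets competed away:

```latex
\pi(q) = \bigl(P - \mathrm{ATC}(q)\bigr)\,q,
\qquad
P = \mathrm{MC}(q^{*}) = \min_{q} \mathrm{ATC}(q) = \mathrm{ATC}(q^{*})
\;\Rightarrow\; \pi(q^{*}) = 0
```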

I’m using Claude 80% of the time at work. At home I still use ChatGPT as my first chatbot, but Claude is way better for any sort of vibe working. Gemini is better than GPT as well, but Claude has a huge context window, and I’ve kind of figured out in my own work that getting AI to do a good job is like 80% getting it the right context and 20% the way you prompt it.

Claude drops Opus 4.6 just now; an hour later OpenAI puts out gpt-5.3-codex.

then we get this, let’s go

The investigation began in October, when someone reported to the National Park Service tip line an Instagram video of a man making the jump on Oct. 8, according to a criminal complaint. The video, posted to an account bearing Propeck’s name, pans to the man’s face as he deploys a parachute, it states.

A license plate reader detected Propeck’s car entering the national park on Oct. 7 and leaving Oct. 8, and photos showed Propeck behind the wheel, wearing the same purple mirrored sunglasses the man was seen wearing in the Instagram video, according to the complaint.

When a park ranger contacted Propeck, he denied he was the man in the video, saying he’d used artificial intelligence to superimpose his face onto the footage, the complaint states.

We are officially in the “It was AI” defense world.

I’ve been using agentic coding tools seriously for about a month, and it’s really an amazing difference, both in terms of raw productivity and the general vibes. Maybe this is just familiarity breeding contempt, but the chatbot interface feels very passive and controlled. There’s something that just hits different about telling the tool to make some changes to xyz.py, without really explaining where xyz.py is or what it does, then watching it poke around your file system to find it, read it, and fill in the gaps in what you asked. If you imagine a future where you can chat with supersmart chatbots, that’s really great, but the impact is limited. If you extrapolate what coding agents can do to humanoid robots or scientific research, it makes a lot more sense that companies are pouring hundreds of billions of dollars into this.
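The "poke around your file system" step is less magic than it looks. Here's a minimal Python sketch of the kind of search an agent's file tool might run when you say "edit xyz.py" with no path. It's an illustration only, not how any particular agent is implemented; `find_file`, `read_best_candidate`, and the skip list are hypothetical.

```python
from pathlib import Path
from typing import List, Optional

# Hypothetical: directories an agent would typically ignore while searching.
SKIP_DIRS = {".git", "node_modules", ".venv", "__pycache__"}


def find_file(name: str, root: str = ".") -> List[Path]:
    """Return every file under `root` named `name`, skipping noisy directories."""
    matches = []
    for path in Path(root).rglob(name):
        rel_parts = path.relative_to(root).parts
        if path.is_file() and not any(part in SKIP_DIRS for part in rel_parts):
            matches.append(path)
    return sorted(matches)


def read_best_candidate(name: str, root: str = ".") -> Optional[str]:
    """Read the shallowest match (a crude stand-in for the agent's judgment)."""
    matches = find_file(name, root)
    if not matches:
        return None
    best = min(matches, key=lambda p: len(p.parts))
    return best.read_text()
```

A real agent layers a lot on top of this (reading imports, asking when there are several candidates), but "search, pick, read" is the basic loop.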

Also, the productivity boost is crazy. I still write a lot of code by hand, but about 50% of the time when I’d otherwise think “Oh, we don’t have X, which would be nice, I’ll put it in the backlog,” my answer now is to just spin up an agent to implement X, and then it’s done with 15-20 minutes of effort on my part. Agents still aren’t writing code on par with human experts, so I’m still doing the hard stuff, but even then it’s a big boost to describe the task to an agent, have it create a first draft with all the boring infra/DB communication scaffolding in place, and then just work on the logic.

Yeah, fuck ChatGPT. I mean, I assume they’re all evil, but fuck ChatGPT.

I’m 100% Gemini for work and Claude for other stuff like shooting the shit.

shouldn’t that be xyz.rs for you?

Python for work these days, which is hilarious because I’m writing a high-throughput proxy server

AI is not as popular in law. Some programming work should probably treat AI more like law does.

Law firms are still on Windows NT 4. They have no incentive to upgrade anything.

It’s being pushed hard by Lexis/Westlaw, etc., but people who write bad code don’t get sanctioned and potentially disbarred.

the thing that hits different for me is the security nightmare here and trying to control this at the org level. otherwise, i’ve been doing the same, though. it’s totally a chainsaw: i know i can use it well, but i can’t trust that everyone will be as considerate of security concerns, and these things often miss them unless you tell them to look. i have a security sub-agent that’s been tattling on people. it’s just very clever, which is its strength and also its weakness. it disobeys me in really clever ways in sandboxes, so much that i think sandboxing is the only way to architect around it and feel safe letting it run wild. unfortunately i don’t have the luxury of trusting people to just download moltbook, go buck wild, and be comfortable with it. i’d feel ok doing that on my personal machine, but never on a work device.
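One way to picture the "security sub-agent" idea is a policy check that vets each shell command an agent proposes before it runs. Below is a minimal Python sketch of a hypothetical allowlist; `review_command`, `ALLOWED`, `GIT_BLOCKED`, and the specific policy choices are made up for illustration and aren't any tool's real config.

```python
import shlex
from dataclasses import dataclass

# Hypothetical policy: programs an agent may run without a human signing off.
ALLOWED = {"ls", "cat", "grep", "git", "pytest"}
# git subcommands that touch shared state or throw away work get escalated.
GIT_BLOCKED = {"push", "reset", "clean", "rebase"}
# Reject chaining, pipes, redirection, and substitution outright.
SHELL_METACHARS = set(";|&<>`$")


@dataclass
class Verdict:
    allowed: bool
    reason: str


def review_command(command: str) -> Verdict:
    """Decide whether an agent-proposed shell command may run unattended."""
    if any(ch in SHELL_METACHARS for ch in command):
        return Verdict(False, "shell metacharacters are not allowed")
    try:
        argv = shlex.split(command)
    except ValueError as exc:  # e.g. an unbalanced quote
        return Verdict(False, f"could not parse command: {exc}")
    if not argv:
        return Verdict(False, "empty command")
    program = argv[0]
    if program not in ALLOWED:
        return Verdict(False, f"{program!r} is not on the allowlist")
    if program == "git" and len(argv) > 1 and argv[1] in GIT_BLOCKED:
        return Verdict(False, f"'git {argv[1]}' needs human review")
    return Verdict(True, "ok")
```

A check like this only narrows what the agent asks for; it isn't isolation. It's easy to slip past (`git -C somewhere push` gets through, since `argv[1]` is `-C`), and anything allowed can still do damage (`pytest` runs whatever test code the agent wrote). That's why real sandboxing ends up being the thing that makes letting it run wild feel safe.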

for me it is a game changer: much of my code toil and issue tracking (i used to have to track 10+ things at once), plus the updates and commit process around it, is automated now. that was a huge part of the annoying side of my job, and now i can just ship. i’ve shown my org i am easily 3-5x, but i don’t think this applies to everyone, and there is a steep learning curve because it is very new and fraught with peril. startup is easy; maintenance is difficult in large code bases.

the exec level doesn’t understand the implications of this stuff yet and is just plowing full steam ahead regardless of consequences. we are basically in the web 1.0 era with this shit in terms of consequences, and an unfortunate small part of my job is trying to predict them. it sucks, but it’s very addicting and fun to use. i’m literally working for fun now, which i couldn’t say even in the most exuberant days of my career.

the creepiest thing about wrangling these things is how often you have to treat them like a persona: oh, this sub-agent’s role is to be a complete asshole; this sub-agent’s job is to merge code responsibly; this orchestrator is responsible for maintaining the knowledge base.
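That persona setup maps onto a surprisingly small amount of structure. A minimal Python sketch, assuming a generic `model(system_prompt, user_message) -> str` function stands in for whatever LLM client you actually use; `SubAgent`, `Orchestrator`, and the role prompts are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical model interface: (system prompt, user message) -> reply text.
ModelFn = Callable[[str, str], str]


@dataclass
class SubAgent:
    role: str
    persona: str  # system prompt that defines what this agent is for

    def run(self, task: str, model: ModelFn) -> str:
        return model(self.persona, task)


@dataclass
class Orchestrator:
    agents: Dict[str, SubAgent]
    model: ModelFn
    knowledge_base: List[str] = field(default_factory=list)

    def dispatch(self, role: str, task: str) -> str:
        """Send a task to one persona and log the result in the shared knowledge base."""
        reply = self.agents[role].run(task, self.model)
        self.knowledge_base.append(f"[{role}] {task} -> {reply}")
        return reply


AGENTS = {
    "reviewer": SubAgent("reviewer", "You are a harsh code reviewer. Flag every problem."),
    "merger": SubAgent("merger", "You merge code only when tests pass and review is clean."),
}

if __name__ == "__main__":
    # Stub model so the sketch runs without an API key: echoes the persona's first sentence.
    def echo_model(system: str, user: str) -> str:
        return f"{system.split('.')[0]} | {user}"

    orch = Orchestrator(AGENTS, echo_model)
    print(orch.dispatch("reviewer", "check PR diff"))
    print(orch.knowledge_base)
```

The persona lives entirely in the system prompt; the orchestrator just routes tasks and keeps the log, which is the "maintaining the knowledge base" part.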

it’s batshit stuff to think about, but it’s the new engineering as i see it. i’m already running into hard cs compression problems two weeks into being let loose org-wise on these tools, which is not my expertise at all given what i’ve done, but suddenly people listen to me. it’s a cs nerd’s dream.

I’ve been warned not to go bullish on anthropic, but it’s so far ahead of the others for what i do. openai models are really opinionated and less configurable. i only work out of my terminal + vim, so this repl is incredible for me and taps into a weird expertise i have. i’m in heaven.

claude code is just a wrapper around opus, but it is a truly impressive tool in terms of automation. we’ve entered a new era. I am the most pessimistic person ever about the usability of these tools for devs, but this is a new thing. I cannot even compare it to anything else.

It’s not even like this is a LOL-AI story. If, instead of ChatGPT, it was an intern or paralegal who cited fake case law because they couldn’t find the sources they needed, the news wouldn’t be that law firms should stop using interns. It would be that the lawyer overseeing those interns was as lazy as the interns who didn’t bother to find proper case law.

Sucks for this guy’s client, but my take is the lawyer sounds like a lazy bastard who would’ve botched the case some other way even if AI didn’t exist.

This is item #1 on my must-learn list for 2026. I think multi-agent patterns have incredible potential, but I feel like I need to get hands-on with them and just haven’t had the time to experiment and build any sort of prototype.